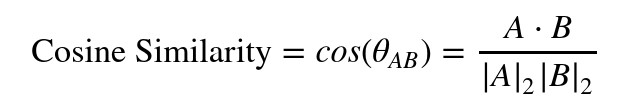

Cosine similarity formula

4/28/2023

I am looking for a way to compare the feature vectors of two images that each contain a person. The application should output a high similarity score when both photos show the same person and a lower score when the people in the photos are different.

What I have tried so far: I extracted two feature vectors using the pretrained VGG19 network. As input I gave a picture of myself and the same picture mirrored. Cosine similarity between these two feature vectors gives a score of only 0.3. I have tried changing the layer from which I extract the activation map (after each max pool layer), but I can get only 0.5 for the same person mirrored. It even gives a higher score for different persons than for the picture of myself compared with the same picture mirrored. Here you can see an example of the output after the block3_pool/MaxPool layer for both pictures. I have also tried Euclidean distance, but it works even worse than cosine: it gives me a bigger distance between the photos of myself than between photos of other people. What other metric could I use?

A clustering method operates on some measure of resemblance (similarity, dissimilarity, or distance) among objects. It uses those resemblances to produce a result, be it a dendrogram or some other result. Cophenetic correlation is a measure of how well the clustering result matches the original resemblances. So, as an example, similarities among samples are clustered using a method like UPGMA to produce a dendrogram. The distances among samples are calculated through the dendrogram (actually to a common node, but the idea holds) to give cophenetic distances. The original between-sample resemblances are correlated with the cophenetic distances to give the cophenetic correlation. If the value is high (near 1), the clustering result is an excellent representation of the original distances; if it is <<1, it is not. See Clarke KR, Somerfield PJ, Gorley RN (2016) Clustering in non-parametric multivariate analyses. 483: 147-155 doi: 10.1016/j.jembe.2016.07.010, which incidentally contains an error as 2 figures are swapped, but it illustrates the idea.

We will calculate this using the adjusted cosine similarity function. To prepare for this method, we have to normalize the item ratings based on the users’ average rating.

Cosine similarity formula explained in easy words

Cosine similarity is a measure of similarity between two non-zero vectors: it is the cosine of the angle between them. Although knowing the angle will tell you how similar the texts are, it’s better to have a value between 0 and 1, which is the range the cosine takes for non-negative vectors such as word counts. That’s where cosine similarity comes into the picture.

We released the k-NN similarity search feature in Amazon Elasticsearch Service, which runs nearest neighbor search on billions of documents, represented by vectors, across thousands of dimensions. The initial release of k-NN used Euclidean distance to measure similarity between vectors. With cosine similarity, you can now measure the orientation between two vectors. Cosine similarity measures the cosine of the angle between two vectors, where a smaller angle (a cosine closer to 1) denotes higher similarity between the vectors. For example, if you use bag-of-words to compare two documents that differ greatly in length yet the most frequent word in both is “pet”, which appears 300 times in the larger document and 75 times in the other, the Euclidean distance between these documents can be large due to the different scales, while cosine similarity can still consider the documents similar due to the common orientation of their content.

The results from k-NN search with cosine similarity can be further improved in precision by leveraging Elasticsearch's post-processing features like aggregations and filtering. With Elasticsearch's highly distributed architecture, you can implement an enterprise-grade search engine based on cosine similarity, with high recall and performance.
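To make the bag-of-words comparison above concrete, here is a minimal sketch in plain Python. Only the counts for “pet” (300 and 75) come from the text; the rest of the vocabulary, the other counts, and the function names are assumptions chosen for illustration, with the longer document simply scaled up from the shorter one.

```python
import math


def dot(a, b):
    """Dot product of two equal-length sequences of numbers."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    norm_a = math.sqrt(dot(a, a))
    norm_b = math.sqrt(dot(b, b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot(a, b) / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Hypothetical word counts over the vocabulary ["pet", "food", "vet"].
# Only the "pet" counts (300 and 75) are taken from the example in the text.
long_doc = [300, 120, 60]
short_doc = [75, 30, 15]

print("cosine similarity: ", cosine_similarity(long_doc, short_doc))
print("Euclidean distance:", euclidean_distance(long_doc, short_doc))
```

Because the two count vectors point in the same direction, the cosine similarity comes out at essentially 1, while the Euclidean distance is in the hundreds purely because of the difference in document length.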
